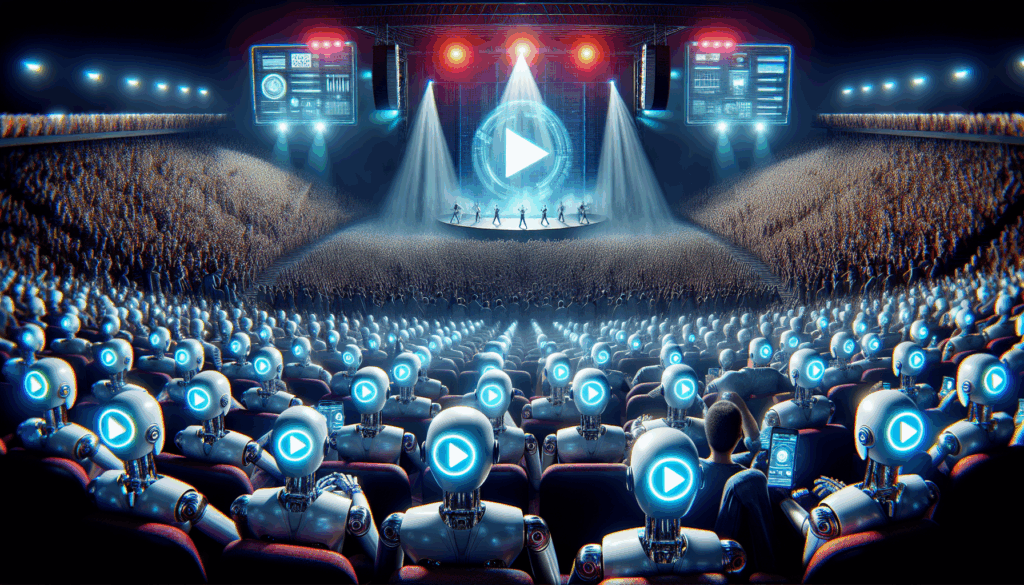

The Real Disruption Isn’t AI Music—it’s AI Listeners

Everyone’s debating synthetic songs while the real disruption floods in through the side door: scripted “fans.” If the allegations of bot‑driven play inflation hold, pro‑rata royalties have become an exploit that distorts charts, misallocates capital, and taxes honest artists. If a stream can be scripted, a royalty can be stolen, and the platform that can’t tell the difference becomes the exit liquidity for fraud.

Pro‑Rata Is a Bug, Not a Feature

Today’s pooled models pay on raw plays, not real attention: all royalty revenue goes into one pot and is split by each track’s share of total plays. That invites farms to manufacture low‑quality loops, inflate their share, and siphon money away from the creators genuine listeners actually chose. It’s not just unfair; it’s unsustainable. Markets clear on trust. When charts become counterfeit storefronts, discovery degrades, advertisers balk, and the whole flywheel slows. Fiscal responsibility here means paying for integrity, not volume theater.
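To make the dilution concrete, here is a minimal sketch of a pro-rata split. The pool size, play counts, and artist names are invented for illustration and do not describe any real platform.

```python
def pro_rata_payouts(pool, plays_by_artist):
    """Split a royalty pool in proportion to each artist's share of total plays."""
    total = sum(plays_by_artist.values())
    if total == 0:
        return {artist: 0.0 for artist in plays_by_artist}
    return {artist: pool * plays / total
            for artist, plays in plays_by_artist.items()}


# Hypothetical month: a 1,000-unit pool shared by two genuine artists.
honest = {"artist_a": 600, "artist_b": 400}
before = pro_rata_payouts(1000.0, honest)

# Same month, same pool, after a farm scripts 1,000 plays of its own track.
with_farm = {**honest, "farm_track": 1000}
after = pro_rata_payouts(1000.0, with_farm)

for artist in with_farm:
    print(artist, before.get(artist, 0.0), "->", after[artist])
```

The pool does not grow when fake plays arrive, so in this toy case the farm's 500 units come straight out of the two honest artists' payouts, each of which is cut in half.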

Build the Moat: Verified Attention Over Catalog Size

The next defensible advantage isn’t more tracks—it’s an integrity stack. Use adversarial ML to score listen authenticity across signals: completion and dwell, session entropy, device diversity, time‑of‑day variance, skip/seek patterns, and cohort outliers. Combine that with privacy‑preserving risk scoring (edge fingerprints, no biometrics), distributor KYC, and automated clawbacks. Reward accounts with high integrity scores; throttle or fine the rest. When incentives shift, fraud math breaks.
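As a rough illustration of scoring across signals, the sketch below combines just two of the signals listed above, session entropy and completion, into a single number. It is a hand-weighted heuristic, not adversarial ML, and the `Session` type, the weights, and the sample data are all assumptions made for this example.

```python
import math
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class Session:
    track_ids: List[str]            # tracks played, in order
    completion_ratios: List[float]  # fraction of each track heard, 0.0 to 1.0


def session_entropy(track_ids):
    """Normalized Shannon entropy of the track mix.

    0.0 means one track on repeat; 1.0 means every play was a different track.
    """
    n = len(track_ids)
    counts = Counter(track_ids)
    if n <= 1 or len(counts) == 1:
        return 0.0
    h = -sum((c / n) * math.log2(c / n) for c in counts.values())
    return h / math.log2(n)


def integrity_score(session, w_entropy=0.6, w_completion=0.4):
    """Weighted blend of entropy and mean completion (weights are illustrative)."""
    if not session.completion_ratios:
        return 0.0
    mean_completion = sum(session.completion_ratios) / len(session.completion_ratios)
    return w_entropy * session_entropy(session.track_ids) + w_completion * mean_completion


# Hypothetical sessions: a varied listener and a single-track loop.
organic = Session(["t1", "t2", "t3", "t4"], [1.0, 0.9, 1.0, 0.8])
looped = Session(["t9"] * 20, [0.35] * 20)

print(round(integrity_score(organic), 3))
print(round(integrity_score(looped), 3))
```

A production system would presumably learn its weights from labeled fraud cases and add the remaining signals (device diversity, time-of-day variance, cohort outliers). The point here is only that each signal reduces to a number that can be thresholded.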

Policy That Pays for Quality, Not Noise

Move from plays to quality engagement: only count streams that pass integrity thresholds (e.g., completion ratios, unique listeners per track/day), apply cooldowns on suspicious surges, and escrow payouts pending anomaly checks. Pilot user‑centric payouts so a listener’s subscription flows to what they actually listened to, not to whatever farm shouted loudest. Publish integrity transparency reports. Treat platform security as cost of goods sold, not a line item to defer.
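The user-centric idea can be sketched in a few lines. The example assumes a flat fee per listener and uses made-up accounts and play counts; real payout rules involve far more than this (rights holders, territories, minimums).

```python
from collections import defaultdict


def user_centric_payouts(fee_per_listener, plays_by_listener):
    """Split each listener's fee across only the artists that listener played."""
    payouts = defaultdict(float)
    for plays in plays_by_listener.values():
        total = sum(plays.values())
        if total == 0:
            continue  # an idle account funds no one in this sketch
        for artist, n in plays.items():
            payouts[artist] += fee_per_listener * n / total
    return dict(payouts)


# Hypothetical month: two real fans and one scripted account, each paying 10.
plays_by_listener = {
    "fan_1": {"artist_a": 30},
    "fan_2": {"artist_b": 20},
    "bot_account": {"farm_track": 950},
}
fee = 10.0

user_centric = user_centric_payouts(fee, plays_by_listener)

# For comparison, the pooled (pro-rata) share of the same 30-unit pot.
pool = fee * len(plays_by_listener)
total_plays = sum(sum(p.values()) for p in plays_by_listener.values())
pro_rata_farm_share = pool * 950 / total_plays

print(user_centric)
print(pro_rata_farm_share)
```

In this toy case the scripted account can only redirect its own 10-unit fee under user-centric payouts, while the pooled split would hand the farm 28.5 of the 30 units. The farm can still open more accounts, which is why the integrity thresholds and escrow checks still matter.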

Founder Playbook: Tools the Industry Will Buy Next

There’s a near‑term stack to build: listen‑integrity APIs, real‑time fraud bureaus for distributors, watermarking plus telemetry for synthetic audio, creator wallets with on‑chain (or auditable) attestations, and insurer‑backed guarantees for clean catalogs. The platform that proves it can defend attention will win artists, advertisers, and conservative capital. Music’s future won’t belong to whoever is best at generating plays; it will belong to whoever is best at verifying them.
